AI-Powered Usability Testing

From Kanban automation to sophisticated multi-agent usability testing. How I built a Figma plugin that delivers comprehensive usability insights in 2-3 minutes.

Introduction

As I was getting deeper into Claude Code and building AI-powered workflows, I wanted to tackle one of the biggest bottlenecks in our design process: usability testing. Designers were struggling to get quick validation on their ideas, and our existing testing methods created delays that slowed down iteration cycles.

What started as a simple Kanban board automation evolved into a sophisticated multi-agent system that can deliver comprehensive usability testing in minutes instead of weeks.

The Problem

Our design process had a clear bottleneck: getting usability feedback took too long.

Designers needed quick validation on their ideas but were stuck with time-consuming options:

- Guerrilla testing: Required coordinating with real users and scheduling

- Expert reviews: Limited by availability of senior designers and researchers

- Formal usability studies: Great for final validation but too heavy for iterative design

- Internal feedback: Fast but limited in perspective and often biased

This meant designers were either shipping without proper validation or spending days waiting for feedback on concepts that needed rapid iteration. We needed something between "no testing" and "formal research study."

The Original Approach

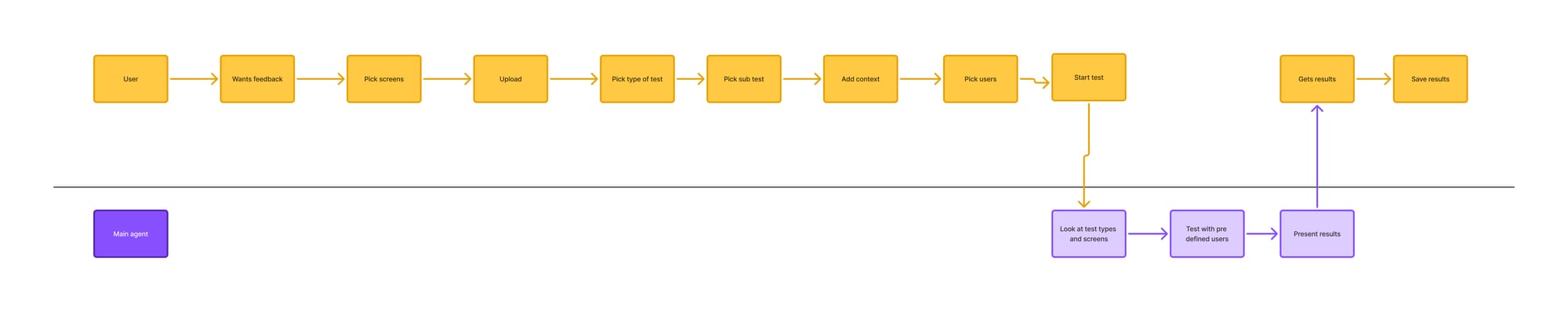

My first instinct was to build an agent that worked within our Kanban board. The idea was simple: as soon as a design moved from "In Progress" to "Ready for Testing," an automated agent would:

- Pull the design files

- Run usability analysis

- Email results to the team

- Move the card to the next stage

I started building this with Claude Code, working on the prompts and automation workflows. It seemed logical—automate the handoff between design and testing.
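The trigger logic described above can be sketched as a small pure function. This is a minimal illustration, not the original automation: the event shape, the action names, and the "Testing Complete" column are all assumptions, since the post does not name the Kanban tool or the stage that follows testing.

```typescript
// Hypothetical column names; only the first two appear in the post.
type BoardColumn = "In Progress" | "Ready for Testing" | "Testing Complete";

// Hypothetical event shape for a card being moved on the board.
interface CardMovedEvent {
  cardId: string;
  from: BoardColumn;
  to: BoardColumn;
}

type PlannedAction =
  | { kind: "pullDesignFiles"; cardId: string }
  | { kind: "runUsabilityAnalysis"; cardId: string }
  | { kind: "emailResults"; cardId: string }
  | { kind: "moveCard"; cardId: string; to: BoardColumn };

// Returns the ordered actions for a card move, or an empty list when the
// move is not the "In Progress" -> "Ready for Testing" transition.
function planActions(moved: CardMovedEvent): PlannedAction[] {
  const isTrigger =
    moved.from === "In Progress" && moved.to === "Ready for Testing";
  if (!isTrigger) return [];
  return [
    { kind: "pullDesignFiles", cardId: moved.cardId },
    { kind: "runUsabilityAnalysis", cardId: moved.cardId },
    { kind: "emailResults", cardId: moved.cardId },
    { kind: "moveCard", cardId: moved.cardId, to: "Testing Complete" },
  ];
}
```

Keeping the planning step separate from the side effects (file access, email, board updates) makes the trigger condition easy to check on its own.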

The Pivot Moment

Then, while having lunch with my collaborator Patrick Dorfer, it hit me: we were solving the wrong problem.

Instead of automating testing at the end of design, what if we enabled testing throughout the design process? Let designers test early and often, right where they were already working.

After lunch, I sat down and mapped out how a Figma plugin could work instead. Rather than waiting for designs to be "ready," designers could get feedback while they were still iterating.

Building the First Version

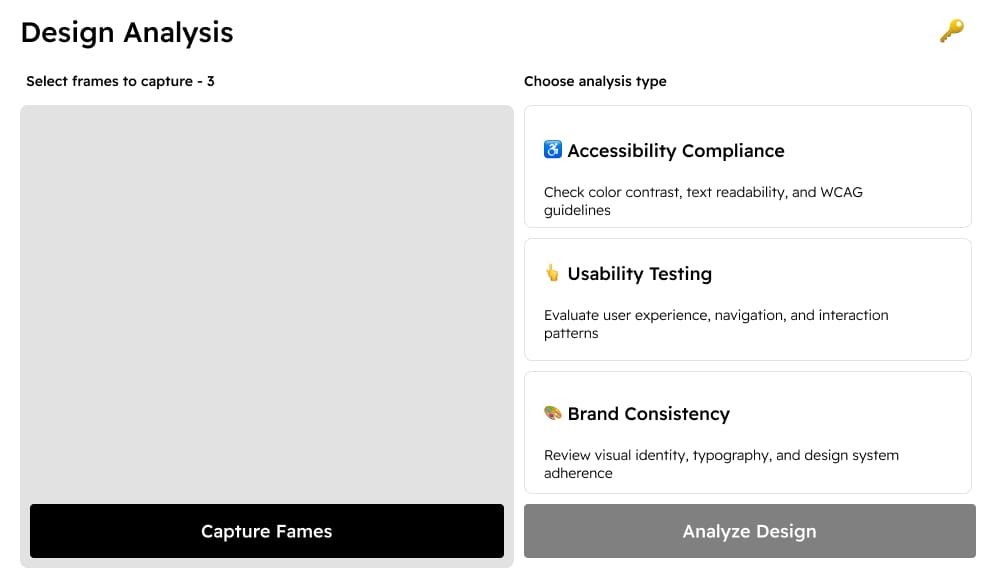

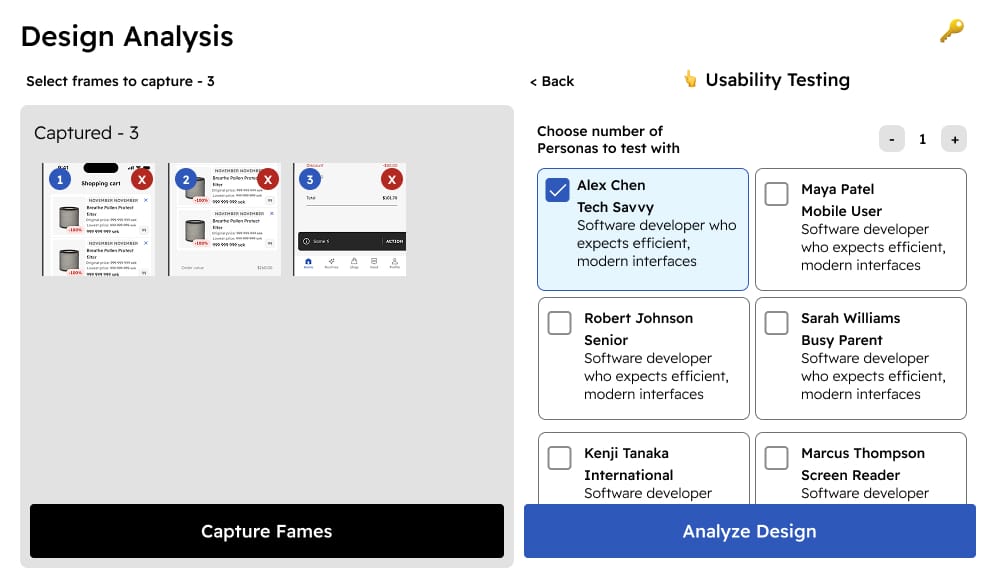

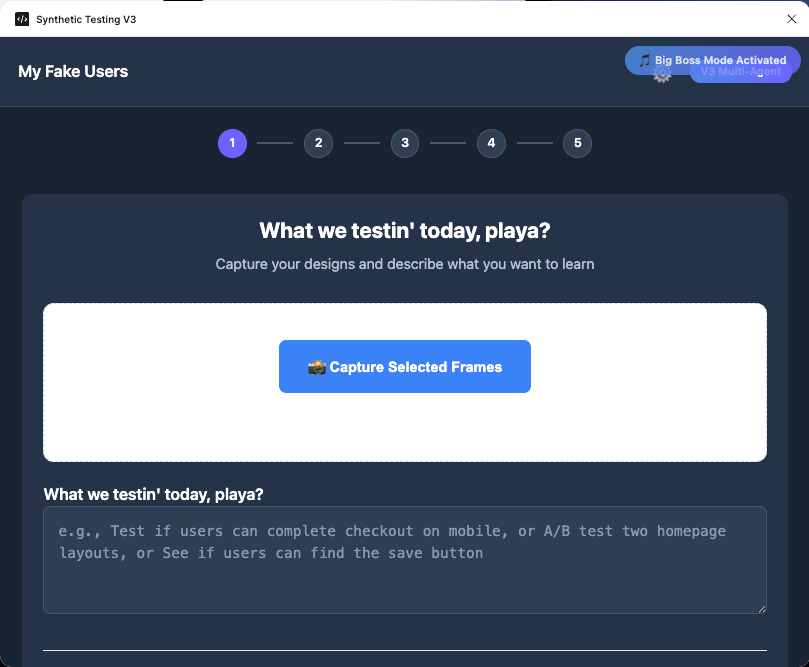

I started with a single-agent system, breaking it down into manageable steps:

- Screen detection: Getting Figma to identify and extract design screens

- Test definition: Letting designers specify what they wanted to test

- Analysis engine: Running usability evaluation on the screens

- Results presentation: Delivering actionable feedback within Figma
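The four steps above could be wired together roughly as follows. This is a sketch under stated assumptions rather than the plugin's actual code: `NodeLike`, `Finding`, `AnalysisEngine`, and `runUsabilityTest` are hypothetical names, the rule that every top-level frame counts as one screen is a guess, and the analysis engine is passed in as a function so the flow can be exercised with a stub instead of a model call.

```typescript
// Minimal shape of a design node. In a real plugin these would come from the
// Figma Plugin API (for example, the children of the current page).
interface NodeLike {
  id: string;
  name: string;
  type: string; // e.g. "FRAME", "TEXT", "RECTANGLE"
}

interface Finding {
  screenId: string;
  issue: string;
  severity: "low" | "medium" | "high";
}

// Hypothetical engine signature; the real engine would call a model.
type AnalysisEngine = (screens: NodeLike[], testGoal: string) => Finding[];

// Step 1: screen detection. Assumption: each frame is one screen.
function detectScreens(nodes: NodeLike[]): NodeLike[] {
  return nodes.filter((node) => node.type === "FRAME");
}

// Steps 2-4: validate the designer's goal, run the analysis, and order the
// findings so the most severe ones are presented first.
function runUsabilityTest(
  nodes: NodeLike[],
  testGoal: string,
  analyze: AnalysisEngine,
): Finding[] {
  const screens = detectScreens(nodes);
  if (screens.length === 0) throw new Error("No screens found to test");
  if (testGoal.trim() === "") throw new Error("A test goal is required");
  const rank: Record<Finding["severity"], number> = { high: 0, medium: 1, low: 2 };
  return [...analyze(screens, testGoal)].sort(
    (a, b) => rank[a.severity] - rank[b.severity],
  );
}
```

Injecting the engine keeps screen detection and result ordering testable without any network access.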

The first iteration was promising. Our user researchers gave feedback that this was getting close to replacing guerrilla testing for early-stage validation. But I noticed the system felt limited, and there were opportunities to make it much more robust.

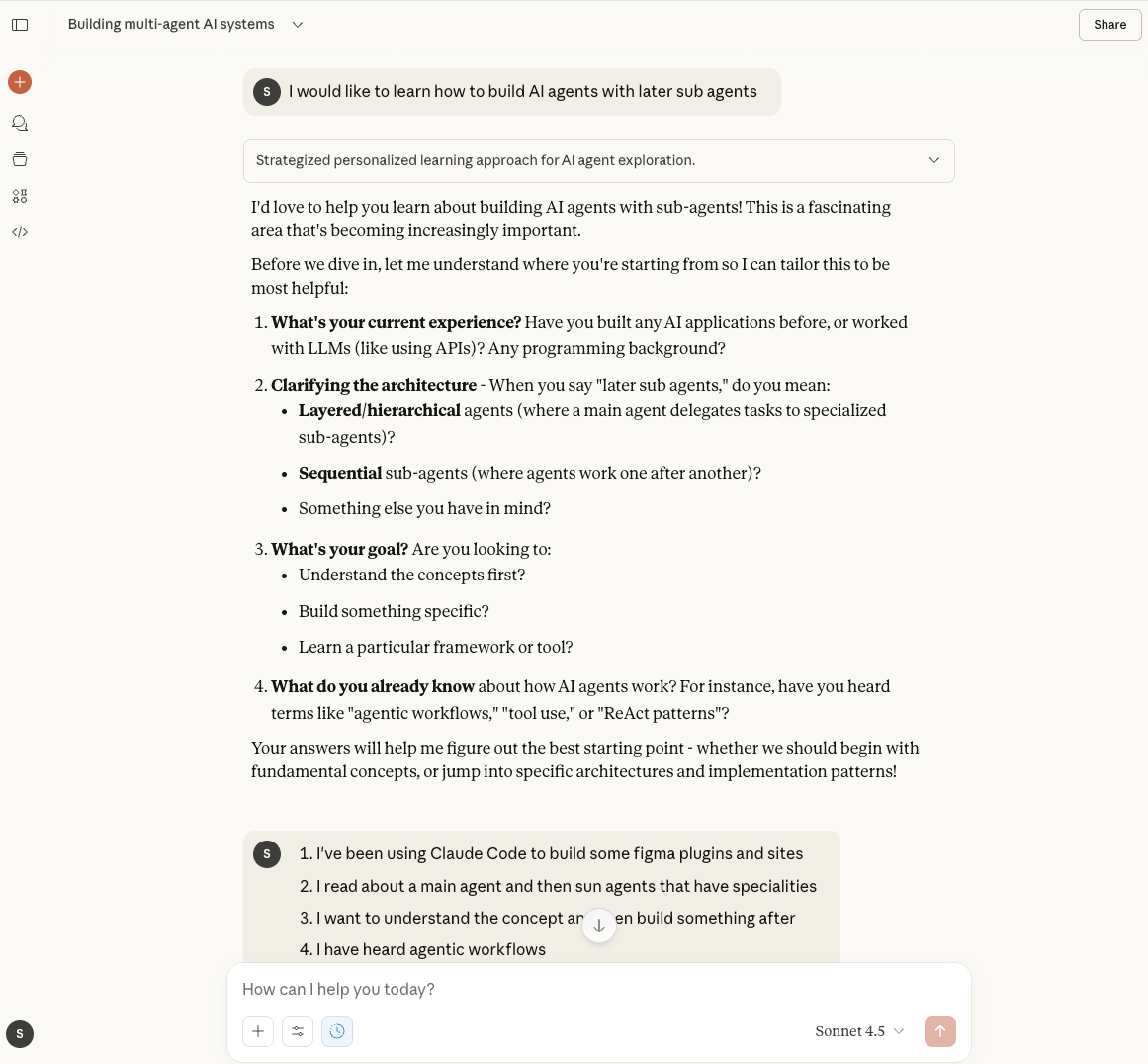

Learning Multi-Agent Systems

Although the plugin worked, I felt it could be simpler. Armed with early positive feedback, I went back to Claude and put it in learning mode to teach me about multi-agent systems. Through a combination of Claude Code experimentation and direct learning with Claude, I started understanding how to build more sophisticated AI architectures.

The Multi-Agent Solution

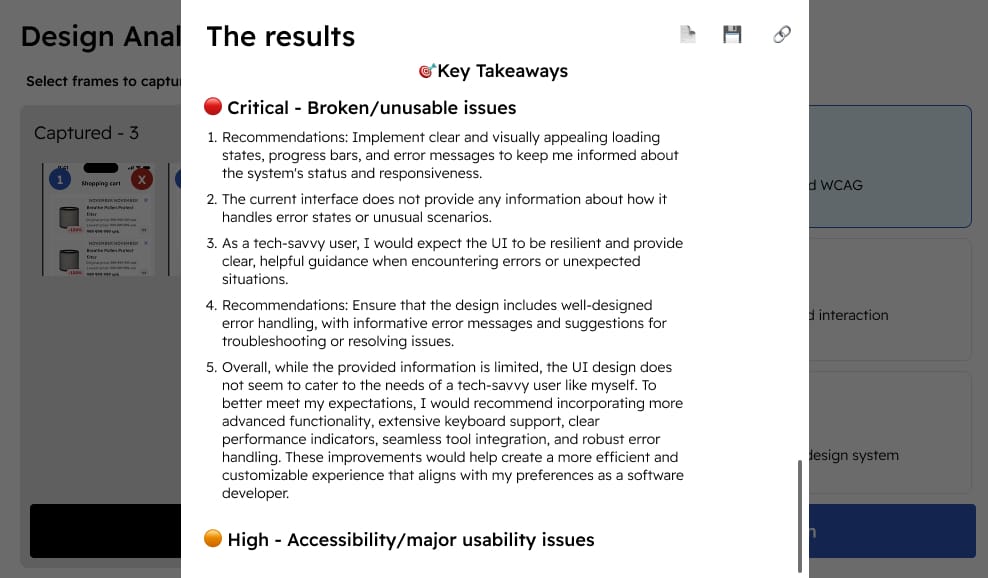

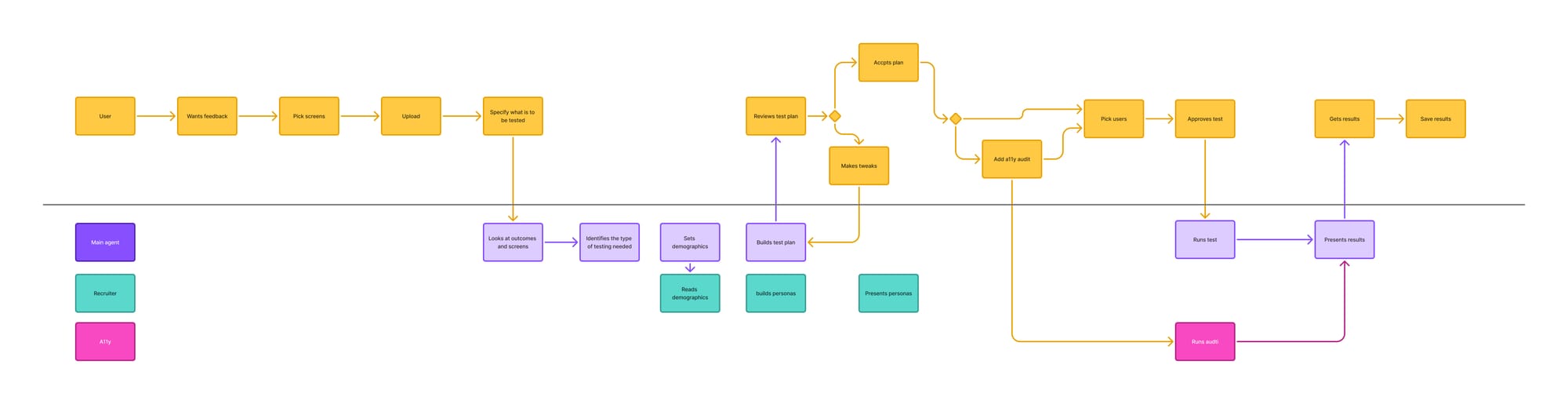

The new system mimics how real usability testing works, with specialized agents for each role:

The User Researcher (Orchestration Agent)

Acts as the main interface—designers upload their screens and describe what they want to test. It creates a comprehensive test plan and coordinates the other agents.

The Recruiter Agent

Builds diverse personas and anti-personas to cover a broad spectrum of users, including special cases for accessibility testing. This ensures we're not just testing for one type of user.

The Accessibility Agent

Specializes in WCAG standards and provides comprehensive accessibility audits alongside general usability feedback.
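To make the kind of check a WCAG audit involves concrete, here is one deterministic example: the WCAG 2.x contrast-ratio calculation. The post does not say how the Accessibility Agent evaluates screens, so treat this as an illustration of a single WCAG criterion, not a description of the agent. The function names are hypothetical; the formula and the 4.5:1 and 3:1 AA thresholds come from the WCAG definitions of relative luminance and contrast.

```typescript
type Rgb = [number, number, number]; // 0-255 per channel

// WCAG 2.x relative luminance of an sRGB color.
function relativeLuminance([r, g, b]: Rgb): number {
  const linearize = (value: number): number => {
    const c = value / 255;
    return c <= 0.03928 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
  };
  return 0.2126 * linearize(r) + 0.7152 * linearize(g) + 0.0722 * linearize(b);
}

// Contrast ratio between two colors, from 1 (identical) to 21 (black on white).
function contrastRatio(a: Rgb, b: Rgb): number {
  const la = relativeLuminance(a);
  const lb = relativeLuminance(b);
  return (Math.max(la, lb) + 0.05) / (Math.min(la, lb) + 0.05);
}

// Level AA requires at least 4.5:1 for normal text and 3:1 for large text.
function meetsAA(foreground: Rgb, background: Rgb, largeText = false): boolean {
  return contrastRatio(foreground, background) >= (largeText ? 3 : 4.5);
}

// Mid-gray #777777 on white is roughly 4.48:1, just under the AA threshold
// for normal text but acceptable for large text.
const grayOnWhiteNormal = meetsAA([119, 119, 119], [255, 255, 255]);
const grayOnWhiteLarge = meetsAA([119, 119, 119], [255, 255, 255], true);
```

Checks like this are cheap to run on every design change, which is what makes it practical to catch contrast problems during design instead of after development.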

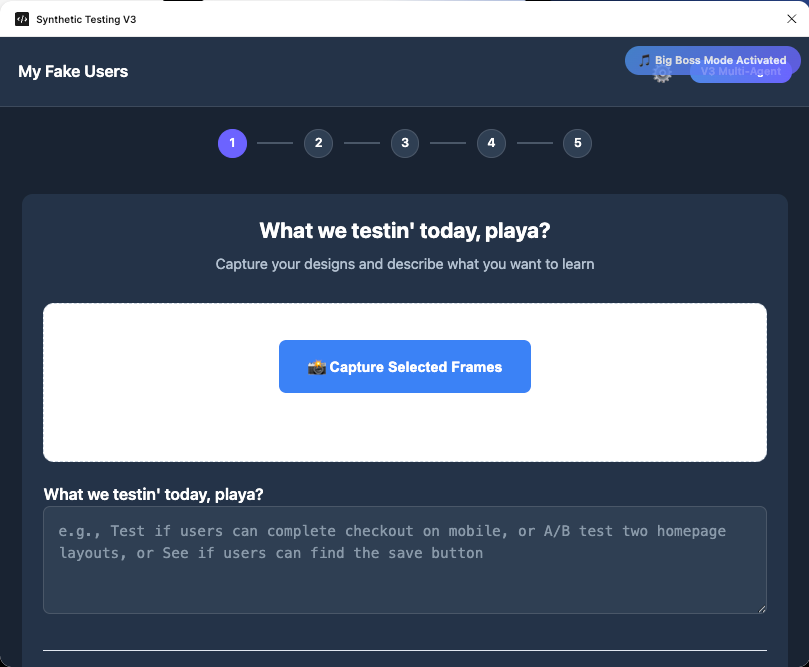

The Designer Workflow

The process is designed to feel natural and fast:

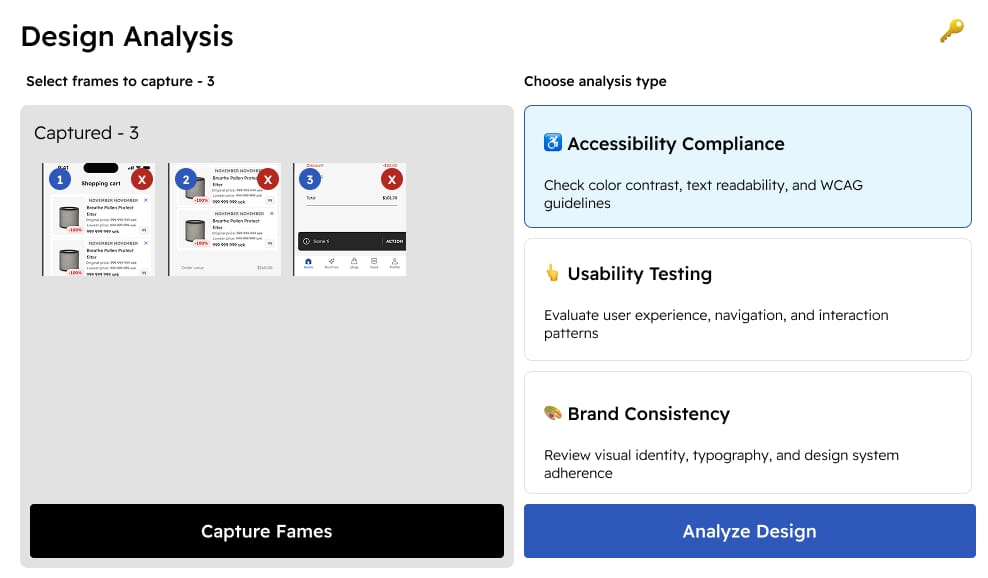

- Upload screens: Add design screens into the plugin

- Define test goals: Tell the system what you want to test (like talking to a real user researcher)

- Review test plan: The system creates a comprehensive test plan for approval

- Select participants: Choose from the generated pool of diverse personas

- Run testing: Get comprehensive usability insights in 2-3 minutes

- Iterate: Make changes and test again immediately
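The workflow above can be read as a small state machine. The sketch below is one possible encoding with hypothetical step names. Two transitions are assumptions the post only implies: a rejected test plan sends the designer back to defining goals, and iterating loops back to uploading the changed screens.

```typescript
type Step =
  | "uploadScreens"
  | "defineGoals"
  | "reviewPlan"
  | "selectParticipants"
  | "runTesting"
  | "iterate";

// The happy path, in the order the steps are listed above.
const nextOnSuccess: Record<Step, Step> = {
  uploadScreens: "defineGoals",
  defineGoals: "reviewPlan",
  reviewPlan: "selectParticipants",
  selectParticipants: "runTesting",
  runTesting: "iterate",
  iterate: "uploadScreens", // assumption: a new round starts with updated screens
};

// Returns the step that follows `current`. The only branch is plan review:
// if the designer does not approve the plan, they refine their goals first.
function nextStep(current: Step, planApproved = true): Step {
  if (current === "reviewPlan" && !planApproved) return "defineGoals";
  return nextOnSuccess[current];
}
```

Making the approval branch explicit keeps the designer in control of the test plan before any simulated participants are run.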

Current Impact

The plugin is currently being rolled out to our design team with strong positive feedback. Designers are using it for:

- Early concept validation: Testing rough ideas before investing in polish

- Accessibility checks: Catching accessibility issues during design, not after development

- Perspective diversity: Getting insights from personas they might not have considered

- Rapid iteration: Testing multiple design directions quickly

The system delivers what used to require days of coordination in minutes, enabling designers to test more frequently and make more informed decisions.

The Fun Factor

Because this is an internal tool, I added a Konami code easter egg that unlocks "Snoop Mode"—the user researcher agent starts speaking in the tone of Snoop Dogg. It's a small detail, but it shows that even serious productivity tools can have personality.

What Made This Work

Starting with learning: Taking time to understand multi-agent systems properly before building made the second iteration dramatically better than trying to hack together a complex single-agent system.

The pivot mindset: Being willing to completely rethink the approach when the lunchtime insight hit. Sometimes the best solution isn't an incremental improvement but a different approach entirely.

Building in Figma: Integrating directly into the designer's workflow rather than creating another tool they had to remember to use.

Iterative feedback: Getting early validation from user researchers helped guide the development and ensured I was building something people would actually use.

Ongoing Development

This is an active project that gets updated every couple of weeks based on designer feedback. The multi-agent architecture makes it easy to add new capabilities or adjust existing ones without rebuilding the entire system.

Future directions include integration with our component library for more contextual feedback and connections to our real user research data to make personas even more accurate.

Technical Architecture

Built using Claude Code for rapid prototyping and iteration, with a multi-agent architecture that separates concerns:

- Orchestration for workflow management

- Specialized agents for domain expertise (accessibility, personas)

- Figma API integration so testing happens inside the designer's existing workflow

- Modular design for easy updates and improvements
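One way to picture this separation of concerns is an orchestrator that holds a list of agents behind a shared interface. The sketch below is an assumption about the general shape, not the plugin's real code: `TestingAgent`, `Orchestrator`, `TestPlan`, and `AgentReport` are hypothetical names, and the agents are synchronous stubs where a real agent would make an asynchronous model call.

```typescript
interface TestPlan {
  goal: string;
  screenIds: string[];
}

interface AgentReport {
  agent: string;
  notes: string[];
}

// Every specialized agent implements the same small interface.
interface TestingAgent {
  name: string;
  run(plan: TestPlan): AgentReport;
}

// The orchestrator builds the plan and hands it to each registered agent.
class Orchestrator {
  private readonly agents: TestingAgent[] = [];

  register(agent: TestingAgent): this {
    this.agents.push(agent);
    return this;
  }

  runTest(goal: string, screenIds: string[]): AgentReport[] {
    const plan: TestPlan = { goal, screenIds };
    return this.agents.map((agent) => agent.run(plan));
  }
}

// Stub agents standing in for the recruiter and accessibility roles.
const recruiterAgent: TestingAgent = {
  name: "recruiter",
  run: (plan) => ({
    agent: "recruiter",
    notes: [`Personas drafted for goal: ${plan.goal}`],
  }),
};

const accessibilityAgent: TestingAgent = {
  name: "accessibility",
  run: (plan) => ({
    agent: "accessibility",
    notes: plan.screenIds.map((id) => `WCAG audit queued for screen ${id}`),
  }),
};

const reports = new Orchestrator()
  .register(recruiterAgent)
  .register(accessibilityAgent)
  .runTest("Can users find the checkout button?", ["s1", "s2"]);
```

With this shape, adding a capability means registering one more agent, and the orchestrator and existing agents stay unchanged.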

TL;DR

Started building a Kanban automation for usability testing, but realized during lunch that a Figma plugin would enable testing earlier in the design process. Built a single-agent system first, got good feedback, then learned about multi-agent systems to create a sophisticated testing tool. Now designers can get comprehensive usability insights—including accessibility audits and diverse persona feedback—in 2-3 minutes instead of days. Currently rolling out to the design team with strong adoption.